Learn What Opportunities and Challenges Are Involved With Edge Computing

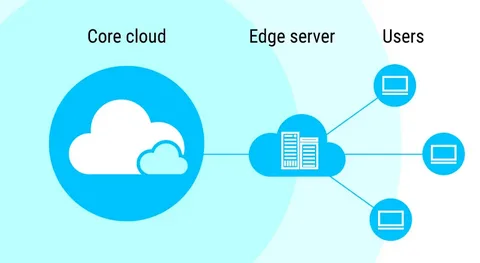

Edge computing refers to the practice of processing and storing data locally, close to where it is generated, rather than transmitting it to centralized data centers or the cloud. This can reduce latency and improve the performance of business applications and services.

Looked at closely, however, edge computing brings both opportunities and challenges.

What is edge computing?

Edge computing is a distributed computing approach in which processing is carried out closer to the data source and end users, at the "edge" of the network. As a result, processing can happen more quickly and with less latency, because data does not have to make a round trip to a central data center or cloud first.

Edge computing is growing in popularity in sectors such as healthcare, manufacturing, and smart cities, where real-time processing and quick responses are essential. As businesses look to reduce latency and improve the performance of their applications and services, the approach continues to gain ground.

How does edge computing work?

With edge computing, data is processed locally on hardware close to the data source, such as routers, gateways, and sensors. In classic cloud computing models, by contrast, data is transferred to a centralized data center or cloud for processing and storage, which can introduce delays and increase network traffic.

Internet of Things (IoT) applications, such as autonomous vehicles, can benefit greatly from this model. By analyzing data locally, edge computing can decrease network latency, enhance data security, and support faster decision-making. It can also reduce the volume of data that must be sent to centralized cloud servers, which can lower costs and improve system efficiency.
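To make the data-reduction idea concrete, here is a minimal Python sketch of what an edge gateway might do with a window of sensor readings: flag out-of-range values locally and forward only a compact summary instead of every raw sample. The `Summary` record, the `summarize_at_edge` function, and the threshold value are hypothetical names chosen for illustration, not part of any particular edge platform.

```python
import statistics
from dataclasses import dataclass


@dataclass
class Summary:
    """Compact record an edge gateway could forward upstream (hypothetical format)."""
    device_id: str
    count: int
    average: float
    minimum: float
    maximum: float
    alerts: list[float]


def summarize_at_edge(device_id: str, readings: list[float], threshold: float) -> Summary:
    """Process a window of raw readings locally and keep only what the cloud needs.

    Readings above `threshold` are collected as alerts (a local, low-latency
    decision); the full window is reduced to a few simple statistics.
    """
    if not readings:
        raise ValueError("readings must not be empty")
    alerts = [r for r in readings if r > threshold]
    return Summary(
        device_id=device_id,
        count=len(readings),
        average=statistics.mean(readings),
        minimum=min(readings),
        maximum=max(readings),
        alerts=alerts,
    )


if __name__ == "__main__":
    raw = [21.0, 21.5, 22.0, 35.2, 21.8]  # hypothetical temperature samples
    print(summarize_at_edge("sensor-7", raw, threshold=30.0))
```

In this design the alert check runs on the device itself, so reacting to an anomaly does not wait on a cloud round trip, while the upstream payload shrinks from every sample in the window to a handful of fields.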

Opportunities provided by edge computing

- One of the key benefits of edge computing is reduced latency, that is, the time it takes for data to travel from its source to a processing or storage point and back.

- This is particularly crucial for real-time applications like driverless cars, industrial automation, and healthcare systems.

- By reducing dependence on a continuous network connection, edge computing can also make applications more resilient to network interruptions or outages.

- By processing data at the edge, organizations can keep crucial operations running even if their network connection is disrupted.

- Edge computing can also lower the cost of transporting large volumes of data across wide-area networks.

- By processing and storing data near where it is created, companies can save on network connectivity costs and reduce the need for expensive data center equipment.

- By cutting the latency of sending data to and from a centralized data center, edge computing can improve application performance.

- This can lead to quicker reaction times, smoother user interactions, and improved overall productivity.
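One common way to keep operations running through a network disruption is a store-and-forward pattern: the edge node keeps working and queues its output while the uplink is down, then forwards the backlog once connectivity returns. The following is a minimal sketch under that assumption; `StoreAndForward` and the `send` callback are hypothetical stand-ins for whatever transport a real deployment uses.

```python
from collections import deque
from typing import Callable


class StoreAndForward:
    """Buffer records locally while the uplink is down, then flush them in order.

    `send` is a hypothetical uplink function that returns True on success.
    `capacity` bounds local storage; when full, the oldest record is dropped.
    """

    def __init__(self, send: Callable[[dict], bool], capacity: int = 1000) -> None:
        self._send = send
        self._buffer: deque = deque(maxlen=capacity)

    def submit(self, record: dict) -> None:
        # Queue first so records always leave in the order they were produced.
        self._buffer.append(record)
        self.flush()

    def flush(self) -> int:
        """Send buffered records in FIFO order, stopping at the first failure."""
        sent = 0
        while self._buffer:
            if not self._send(self._buffer[0]):
                break  # uplink still down: keep the record and retry later
            self._buffer.popleft()
            sent += 1
        return sent

    def pending(self) -> int:
        return len(self._buffer)


if __name__ == "__main__":
    link_up = False

    def fake_send(record: dict) -> bool:
        # Stand-in for a real network call; succeeds only when the link is up.
        if link_up:
            print("sent", record)
        return link_up

    buf = StoreAndForward(fake_send, capacity=100)
    buf.submit({"seq": 1})
    buf.submit({"seq": 2})
    print("pending while offline:", buf.pending())
    link_up = True
    buf.flush()
    print("pending after reconnect:", buf.pending())
```

Bounding the buffer with `deque(maxlen=...)` keeps memory use predictable on a constrained device, at the cost of silently dropping the oldest records during a long outage; a production system might persist the queue to disk instead.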

Challenges involved in edge computing

- Edge computing can widen the attack surface, especially if edge devices are not adequately protected. Risks include unauthorized access, data breaches, and other kinds of cyberattacks.

- Managing and monitoring edge infrastructure can be challenging, especially as the number of endpoints and devices grows.

- Deploying edge computing may also require a significant investment in IT resources and expertise.

- Spreading data across many locations can complicate data management and governance. Companies must ensure that data is appropriately collected, processed, and stored while complying with legal obligations and privacy regulations.

- As edge computing continues to evolve, there is also a need for standardization and interoperability across devices, platforms, and manufacturers. This is difficult to achieve given the variety of edge computing technologies and approaches.
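One small piece of the management problem is simply knowing which devices are still alive. The sketch below assumes each device periodically sends a heartbeat; it records the last check-in time per device and reports those that have been silent for longer than a timeout. `HeartbeatMonitor` and the 30-second timeout are illustrative assumptions, not a standard API, and the injectable `clock` makes the behavior easy to test.

```python
import time
from typing import Callable, Dict, List, Optional


class HeartbeatMonitor:
    """Track the last time each edge device checked in and report stale ones.

    `timeout_s` is a hypothetical policy value: a device that has not sent a
    heartbeat within this many seconds is treated as unreachable.
    """

    def __init__(self, timeout_s: float, clock: Callable[[], float] = time.monotonic) -> None:
        self.timeout_s = timeout_s
        self._clock = clock
        self._last_seen: Dict[str, float] = {}

    def heartbeat(self, device_id: str) -> None:
        # Record the check-in time; unknown devices are registered implicitly.
        self._last_seen[device_id] = self._clock()

    def stale_devices(self, now: Optional[float] = None) -> List[str]:
        """Return sorted IDs of devices whose last heartbeat exceeds the timeout."""
        now = self._clock() if now is None else now
        return sorted(
            device_id
            for device_id, seen in self._last_seen.items()
            if now - seen > self.timeout_s
        )


if __name__ == "__main__":
    fake_time = [0.0]  # controllable clock for the demo
    monitor = HeartbeatMonitor(timeout_s=30.0, clock=lambda: fake_time[0])
    monitor.heartbeat("gateway-a")
    monitor.heartbeat("camera-b")
    fake_time[0] = 20.0
    monitor.heartbeat("gateway-a")
    fake_time[0] = 45.0
    print(monitor.stale_devices())  # → ['camera-b']
```

With thousands of endpoints, a real deployment would feed these results into alerting or a fleet-management dashboard rather than printing them.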

Overall, edge computing can be an excellent choice for managing IT resources and data. It can save costs in some situations, but it can also be costly to develop and maintain, especially in settings where a large number of devices must be managed and monitored.